Supervised Autonomy for Subsea Robots

Jan 1, 2025

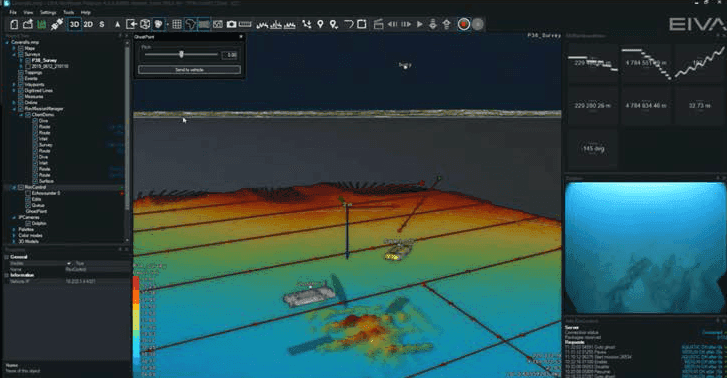

I worked on designing the user experience for supervising autonomous underwater robots used in offshore inspection and survey missions. The goal was to transform complex robotics systems into clear, trustworthy interfaces that allow operators to plan and supervise autonomous missions instead of manually piloting vehicles. The platform combines AI perception, navigation, and robotics control to enable progressive levels of autonomy in subsea operations.

Category

Digital Product Design

Reading Time

10 Min

Date

The Challenge

Traditional subsea operations rely on manual control of remotely operated vehicles (ROVs).

Pilots must simultaneously:

monitor multiple sensor feeds

manually control vehicle movement

interpret complex subsea environments

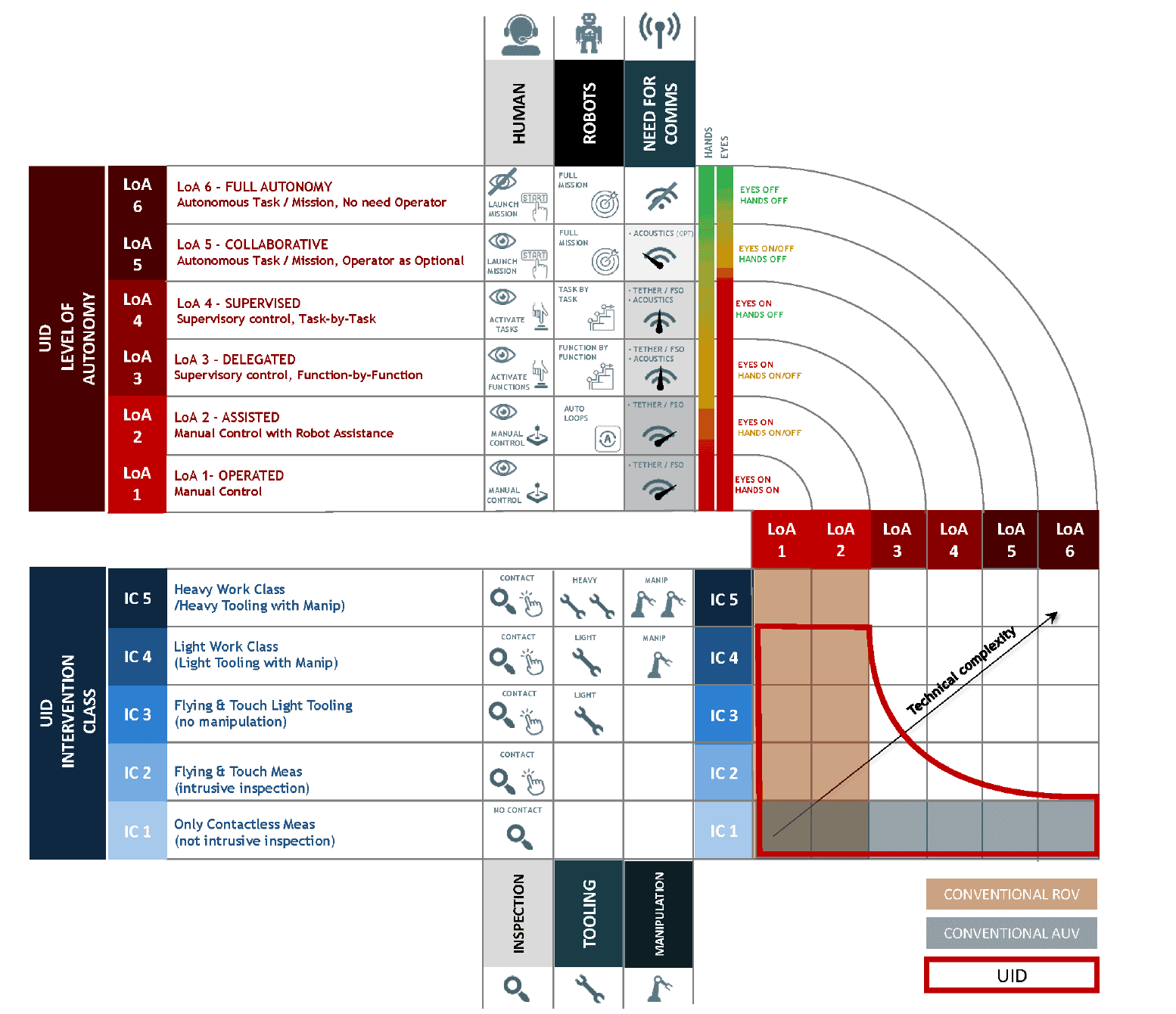

As autonomy becomes possible, the operator’s role shifts from pilot → mission supervisor.

This creates a major UX challenge:

How do we design interfaces that allow humans to trust and supervise autonomous robots operating in complex environments?

My Role

Product Designer — Autonomy Systems

I worked closely with product managers, robotics engineers, and domain experts to translate autonomy technology into usable workflows.

My work included:

defining the UX vision for autonomy

designing mission planning and monitoring workflows

facilitating cross-team workshops

aligning UX with robotics architecture and product strategy

documenting the mission management UX for the autonomy platform

Understanding the System

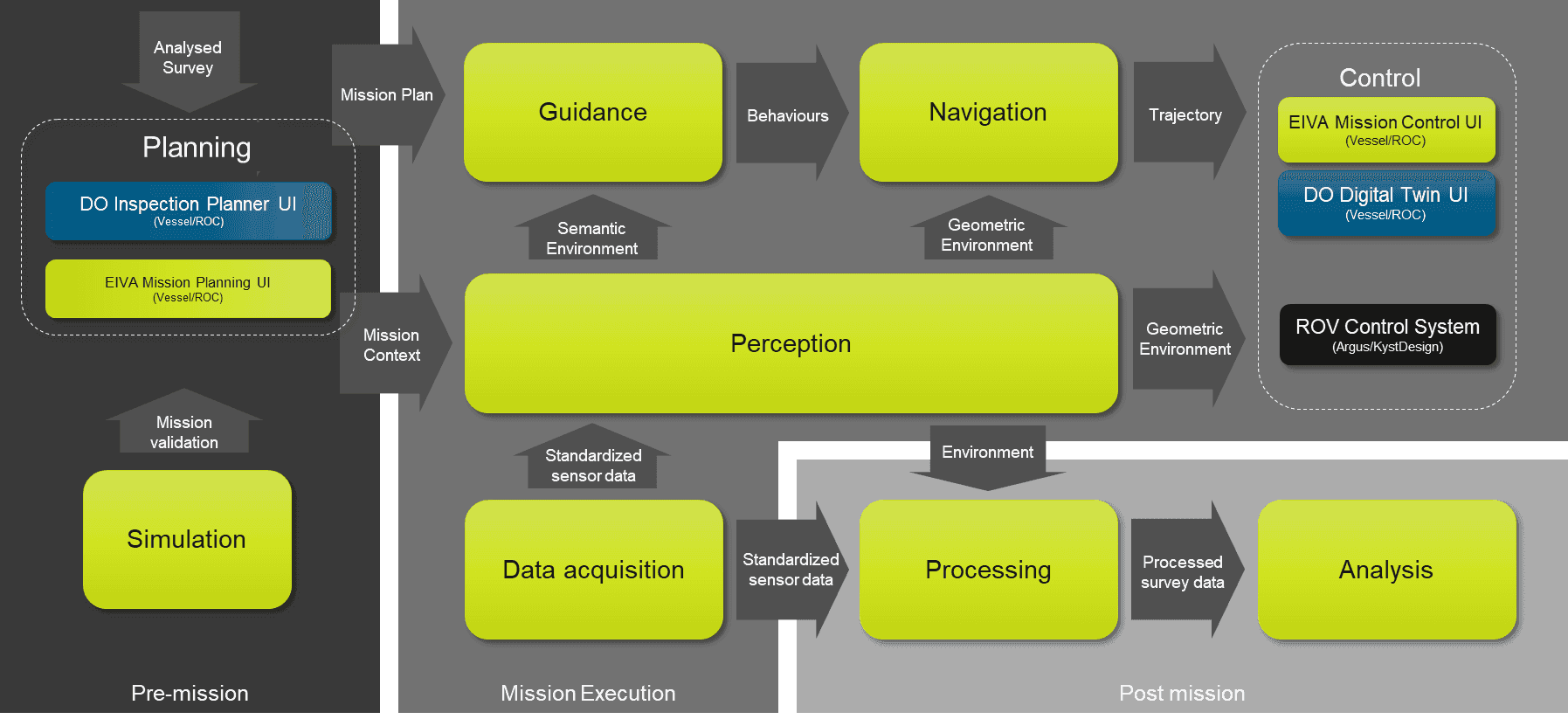

The autonomy system is built around four core components:

Perception

Sensors and AI map the environment and estimate the robot’s position. Through SLAM (Simultaneous Localization and Mapping), the system continuously estimates its position and builds a map of the environment.

Guidance

The decision engine that selects behaviours based on mission goals. It executes missions using behaviour trees, selecting actions based on the mission goal and sensor data.

Navigation

Converts mission logic into vehicle movement.

Vehicle Interface

Connects the autonomy system to the robot’s control system.

Understanding this architecture allowed me to design interfaces that expose system logic without overwhelming the operator.
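To make the Guidance component more concrete, here is a minimal behaviour-tree sketch in Python. It is not the platform's actual implementation. The node classes, the blackboard keys (`image_quality`, `min_quality`, `active`) and the 0.6 quality threshold are all assumptions chosen for illustration. It shows how a tree can select between following a pipeline and re-inspecting based on sensor data.

```python
from enum import Enum
from typing import Any, Callable, Dict, List


class Status(Enum):
    SUCCESS = "success"
    FAILURE = "failure"
    RUNNING = "running"


Blackboard = Dict[str, Any]  # shared mission state; keys are hypothetical


class Action:
    """Leaf node wrapping a function that reads or writes the blackboard."""

    def __init__(self, name: str, fn: Callable[[Blackboard], Status]):
        self.name = name
        self.fn = fn

    def tick(self, bb: Blackboard) -> Status:
        return self.fn(bb)


class Sequence:
    """Ticks children in order; stops at the first one that is not SUCCESS."""

    def __init__(self, children: List[Any]):
        self.children = children

    def tick(self, bb: Blackboard) -> Status:
        for child in self.children:
            status = child.tick(bb)
            if status is not Status.SUCCESS:
                return status
        return Status.SUCCESS


class Selector:
    """Ticks children in order; returns the first result that is not FAILURE."""

    def __init__(self, children: List[Any]):
        self.children = children

    def tick(self, bb: Blackboard) -> Status:
        for child in self.children:
            status = child.tick(bb)
            if status is not Status.FAILURE:
                return status
        return Status.FAILURE


def quality_ok(bb: Blackboard) -> Status:
    # Condition: is the inspection data good enough to keep going?
    ok = bb["image_quality"] >= bb["min_quality"]
    return Status.SUCCESS if ok else Status.FAILURE


def follow_pipeline(bb: Blackboard) -> Status:
    bb["active"] = "follow_pipeline"
    return Status.RUNNING


def re_inspect(bb: Blackboard) -> Status:
    bb["active"] = "re_inspect"
    return Status.RUNNING


# Prefer following the pipeline; fall back to re-inspection on poor quality.
tree = Selector([
    Sequence([Action("quality_ok", quality_ok),
              Action("follow_pipeline", follow_pipeline)]),
    Action("re_inspect", re_inspect),
])


def select_behaviour(image_quality: float, min_quality: float = 0.6) -> str:
    """Tick the tree once and report which behaviour became active."""
    bb: Blackboard = {"image_quality": image_quality, "min_quality": min_quality}
    tree.tick(bb)
    return bb["active"]
```

Calling `select_behaviour(0.9)` activates `follow_pipeline`, while `select_behaviour(0.3)` falls through to `re_inspect`. From a UX perspective, the name of the active node is the kind of system logic an interface can surface without exposing the whole tree.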

The roadmap aims to reach Level 4 supervised autonomy, where robots execute missions while humans supervise and intervene only when necessary.

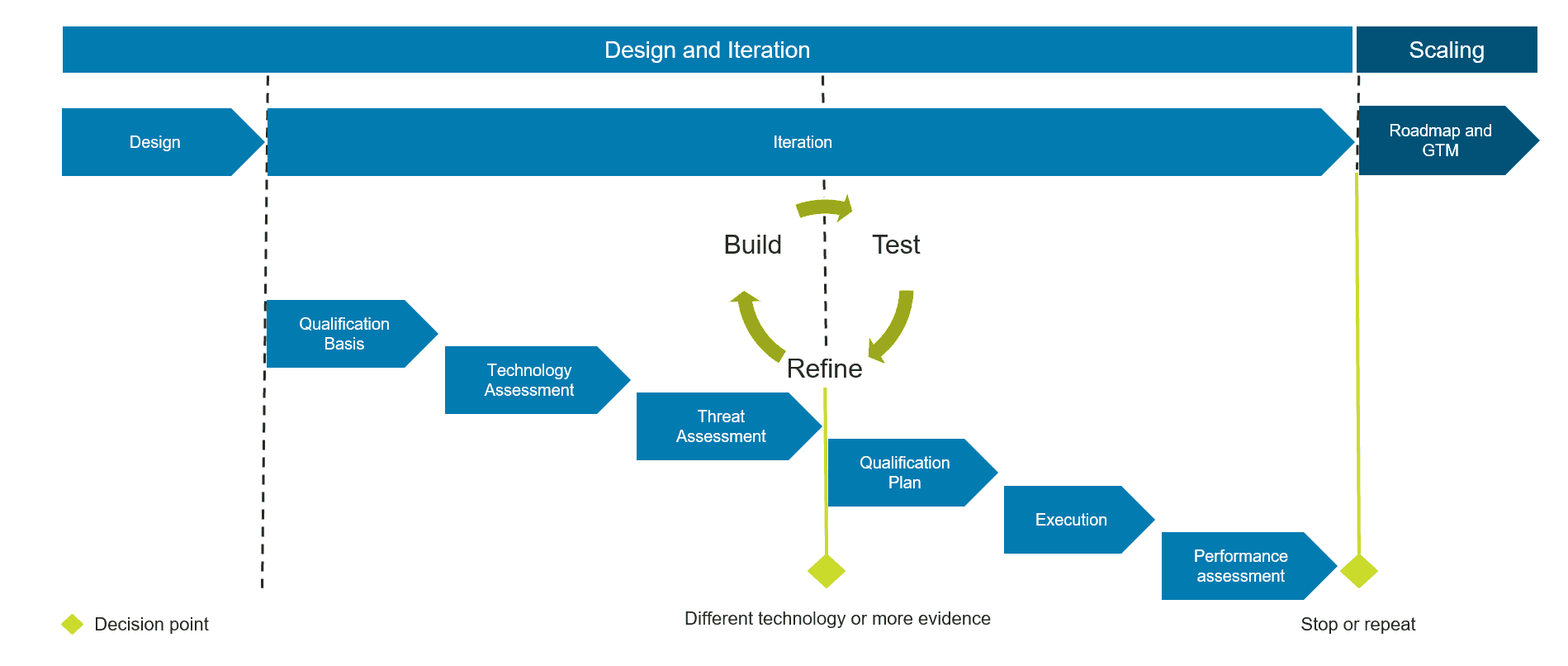

Process

UX Vision

The UX strategy focused on reducing cognitive load while maintaining human oversight.

Key principles:

Mission-centric UI

Design around mission goals instead of system internals.

Transparency

Operators should always know what the robot is doing and why.

Human-in-the-loop autonomy

Operators supervise missions and can intervene at any time.

Progressive complexity

Simple tasks are easy to start, while advanced control remains available.

Designing Mission Management (NDA)

A key part of my work was defining the Mission Management System.

This interface allows operators to:

plan inspection missions

monitor robot behaviour

understand system decisions

intervene when necessary

The system connects mission planning, execution, monitoring, and post-mission analysis into one workflow.

Mission Planning

Operators define missions using reusable behaviour blocks such as:

go to waypoint

follow pipeline

maintain distance from asset

inspect structure

re-inspect if quality is insufficient

These behaviours are composed into larger mission workflows.

This modular structure allows complex missions to be built without coding.
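As a rough illustration of the modular idea, the sketch below models behaviour blocks as plain data that a planning UI could assemble. The `Block` and `Mission` classes, the block identifiers and the parameter names are hypothetical and are not taken from the actual platform.

```python
from dataclasses import dataclass, field
from typing import Dict, List

# Block kinds mirror the behaviours listed above; the identifiers are assumed.
ALLOWED_BLOCKS = {
    "go_to_waypoint",
    "follow_pipeline",
    "maintain_distance",
    "inspect_structure",
    "re_inspect_if_quality_insufficient",
}


@dataclass
class Block:
    kind: str
    params: Dict[str, float] = field(default_factory=dict)


@dataclass
class Mission:
    name: str
    blocks: List[Block] = field(default_factory=list)

    def add(self, kind: str, **params: float) -> "Mission":
        """Append a block; reject kinds the planner does not know."""
        if kind not in ALLOWED_BLOCKS:
            raise ValueError(f"unknown behaviour block: {kind}")
        self.blocks.append(Block(kind, dict(params)))
        return self  # returning self allows chained calls

    def summary(self) -> List[str]:
        """Human-readable step list, e.g. for a mission overview panel."""
        return [f"{i}. {b.kind}" for i, b in enumerate(self.blocks, start=1)]


mission = (
    Mission("pipeline survey")
    .add("go_to_waypoint", north_m=120.0, east_m=45.0, depth_m=80.0)
    .add("follow_pipeline", speed_mps=0.5)
    .add("re_inspect_if_quality_insufficient", min_quality=0.6)
)
```

Because each block is only a kind plus parameters, the operator composes the mission by arranging blocks rather than writing code, and the same structure can drive the planning view and the step list shown during execution.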

Policy-Driven Autonomy

A key concept I worked with was policy-based mission logic.

Instead of hardcoding behaviour, the system follows policies such as:

slow down near structures

maintain a minimum distance to assets

avoid restricted zones

Policies allow missions to adapt dynamically to the environment while maintaining safety.
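One way to picture policy-based logic is as a set of small rules that each may cap the vehicle's speed, evaluated on every update. The sketch below is an assumption-laden illustration: the thresholds, the `VehicleState` fields and the choice to model every policy as a speed cap are simplifications made here, not details of the real system (which would, for example, actively move away from an asset rather than only stop).

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional

# Illustrative thresholds; real values would come from mission configuration.
SLOW_RADIUS_M = 5.0
SLOW_SPEED_MPS = 0.3
MIN_DISTANCE_M = 2.0


@dataclass
class VehicleState:
    distance_to_asset_m: float
    in_restricted_zone: bool = False


@dataclass
class Decision:
    speed_mps: float
    triggered: List[str] = field(default_factory=list)


# A policy returns a speed cap when it applies, or None when it does not.
Policy = Callable[[VehicleState], Optional[float]]


def slow_near_structures(s: VehicleState) -> Optional[float]:
    return SLOW_SPEED_MPS if s.distance_to_asset_m < SLOW_RADIUS_M else None


def maintain_min_distance(s: VehicleState) -> Optional[float]:
    return 0.0 if s.distance_to_asset_m < MIN_DISTANCE_M else None


def avoid_restricted_zones(s: VehicleState) -> Optional[float]:
    return 0.0 if s.in_restricted_zone else None


POLICIES: List[Policy] = [
    slow_near_structures,
    maintain_min_distance,
    avoid_restricted_zones,
]


def apply_policies(state: VehicleState, requested_speed_mps: float) -> Decision:
    """Apply every policy; the most restrictive cap wins."""
    decision = Decision(speed_mps=requested_speed_mps)
    for policy in POLICIES:
        cap = policy(state)
        if cap is not None:
            decision.speed_mps = min(decision.speed_mps, cap)
            decision.triggered.append(policy.__name__)
    return decision
```

The `triggered` list is the part that matters most for the interface: it records which policies changed the robot's behaviour, which is what an operator needs to see when asking why the vehicle slowed down or stopped.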

Mission Monitoring

Another key UX challenge was making autonomy observable.

Operators must be able to answer questions like:

Where is the robot?

What is it doing right now?

What will it do next?

Why did it change behaviour?

The monitoring interface therefore exposes:

robot location and trajectory

active mission behaviour

system state and sensor confidence

alerts and policy triggers

This creates trust and situational awareness, which are critical when supervising autonomous robots.
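The four operator questions map naturally onto a status snapshot that the monitoring view could render. The following is a hypothetical data shape, not the platform's telemetry format; the field names, the 0.5 confidence threshold and the sample values in the usage note are assumptions.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Tuple


@dataclass
class Snapshot:
    position_m: Tuple[float, float, float]  # north, east, depth (assumed frame)
    active_behaviour: str
    next_behaviour: Optional[str]
    last_change_reason: str
    sensor_confidence: float  # 0.0 to 1.0
    alerts: List[str] = field(default_factory=list)


def operator_summary(s: Snapshot, min_confidence: float = 0.5) -> Dict[str, object]:
    """Answer the operator's four questions from a single snapshot."""
    north, east, depth = s.position_m
    alerts = list(s.alerts)
    if s.sensor_confidence < min_confidence:
        # Low confidence is surfaced as an alert so it is never silent.
        alerts.append("low sensor confidence")
    return {
        "where": f"N {north:.1f} m, E {east:.1f} m, depth {depth:.1f} m",
        "doing": s.active_behaviour,
        "next": s.next_behaviour or "mission complete",
        "why": s.last_change_reason,
        "alerts": alerts,
    }
```

For example, a snapshot with `active_behaviour="re_inspect"`, `last_change_reason="image quality below threshold"` and `sensor_confidence=0.4` would yield a summary whose `alerts` list includes `"low sensor confidence"`, giving the operator both the current behaviour and the reason it changed in one place.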

Impact

The work contributed to the foundation of the EIVA Autonomy System, enabling:

supervised robotic inspection missions

perception-driven navigation

scalable autonomy across subsea robots
